Practical question-answering systems often use a technique called answer selection. Given a question — say, “When was Serena Williams born?” — they perform an ordinary, keyword-based document search, then select one sentence from the retrieved documents to serve as an answer.

Today, most answer selection systems are neural networks trained on questions and sets of candidate answers: given a question, they must learn to choose the right answer from among the candidates. During operation, they consider each candidate sentence independently and estimate its chance of being the correct answer.

But this approach has limitations. Imagine an article that begins “Serena Williams is an American tennis player. She was born on September 26, 1981.” If the system has learned to consider candidate answers independently, it will have no choice but to assign “September 26, 1981” a low probability, as it has no way of knowing who “she” is. Similarly, a document might mention Serena Williams by name only in its title. In this case, accurate answer selection requires a more global sense of context.

In a pair of papers we are presenting this spring, my colleagues and I investigate how to add context to answer selection systems without incurring overwhelming computational costs.

We are presenting the first paper at the European Conference on Information Retrieval (ECIR), at the end of this month. Ivano Lauriola, an applied scientist in the Alexa AI organization, and I will describe a technique for using both local and global context to significantly improve the precision of answer selection.

Three weeks later, at the Conference of the European Chapter of the Association for Computational Linguistics (EACL), Rujun Han, a graduate student at the University of Southern California who joined our team as an intern in summer 2020; Luca Soldaini, an applied scientist in the Alexa AI organization; and I will present a more effective technique for adding global context, which involves vector representations of a few selected sentences.

By combining this global-context approach with the local-context approach of the earlier paper, we demonstrate precision improvements over the state-of-the-art answer selection system of 6% and 11% on two benchmark datasets.

Local context

In both papers, all of our models build upon a model we presented at AAAI 2020, which remains the state of the art for answer selection. That model adapts a pretrained, Transformer-based language model — such as BERT — to the task of answer selection. Its inputs are concatenated question-answer pairs.

In our ECIR paper, to add local context to the basic model, we expand the input to include the sentences in the source text that precede and follow the answer candidate. Each word of the input undergoes three embeddings, or encodings as fixed-length vectors.

One is a standard word embedding, which encodes semantic content as location in the embedding space. The second is a positional embedding, which encodes the word’s location in its source sentence.

The third is a sentence embedding, which indicates which of the input sentences the word comes from. This enables the model to learn relationships between the words of the candidate answer and those of the sentences before and after it.
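As a rough illustration of the expanded input (not the code used in the paper), the sketch below lays out the three pieces of information each word carries before it is embedded: the token itself, its position in its source sentence, and the index of the sentence it comes from. The function name `build_input`, the whitespace tokenizer, and the ordering of the sentences are assumptions made for the example; a real system would use the language model's own tokenizer and special tokens.

```python
from typing import List, Tuple

def build_input(question: str, previous: str, candidate: str,
                following: str) -> List[Tuple[str, int, int]]:
    """Return (token, position_in_sentence, sentence_id) triples.

    Each element of a triple would index a separate embedding table:
    word embedding, positional embedding, and sentence embedding.
    Sentence ids (assumed): 0 = question, 1 = preceding sentence,
    2 = answer candidate, 3 = following sentence.
    """
    rows = []
    sentences = [question, previous, candidate, following]
    for sentence_id, sentence in enumerate(sentences):
        # Whitespace splitting is a stand-in for a real subword tokenizer.
        for position, token in enumerate(sentence.lower().split()):
            rows.append((token, position, sentence_id))
    return rows

rows = build_input(
    question="When was Serena Williams born?",
    previous="Serena Williams is an American tennis player.",
    candidate="She was born on September 26, 1981.",
    following="",  # the candidate is the last sentence in this toy text
)
```

Because "she" (sentence 2) and "serena" (sentence 1) appear in the same input with different sentence ids, a model trained on such inputs has the chance to learn that the pronoun refers to the name in the preceding sentence.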

We also investigated a technique for capturing global context: a 50,000-dimensional vector records how often each word of a 50,000-word lexicon occurs in the source text. We then used a technique called random projection to reduce that vector to 768 dimensions, the same size as the local-context vector.
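The count-and-project idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: the tiny lexicon, the function names, the Gaussian projection matrix, and the 1/sqrt(d) scaling are all choices made for the example. To avoid storing a 50,000 by 768 matrix, each row is regenerated on demand from a per-row seed.

```python
import random
from collections import Counter
from typing import Dict, List

def count_vector(text: str, lexicon: Dict[str, int]) -> Dict[int, int]:
    """Sparse bag-of-words counts, mapping lexicon index -> count."""
    counts = Counter(w for w in text.lower().split() if w in lexicon)
    return {lexicon[w]: c for w, c in counts.items()}

def random_projection(sparse_counts: Dict[int, int], out_dim: int = 768,
                      seed: int = 0) -> List[float]:
    """Project a sparse count vector onto out_dim dimensions.

    Row `index` of a fixed Gaussian matrix is regenerated from its own
    seed, so the same word always maps to the same random direction.
    """
    projected = [0.0] * out_dim
    for index, count in sparse_counts.items():
        rng = random.Random(seed * 1_000_003 + index)
        for j in range(out_dim):
            projected[j] += count * rng.gauss(0.0, 1.0)
    scale = out_dim ** -0.5  # common normalization, assumed here
    return [value * scale for value in projected]

# Toy four-word lexicon standing in for the 50,000-word one.
lexicon = {"serena": 0, "williams": 1, "tennis": 2, "born": 3}
counts = count_vector(
    "Serena Williams is an American tennis player. Serena was born in 1981",
    lexicon,
)
vector = random_projection(counts)
```

The projection is linear and deterministic, so documents with similar word counts land near one another in the 768-dimensional space.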

In tests, we compared our system to the state-of-the-art Transformer-based system, which doesn’t factor in context, and to an ensemble system that uses a separate encoder for each answer candidate and each of the sentences flanking it. The ensemble baseline allowed us to measure how much of our model’s success depended on the inference of relationships between adjacent sentences, as opposed to simple exploitation of the additional information they contain.

On three different datasets and two different measures of precision, our model outperformed the baselines across the board. Indeed, the ensemble system fared much worse than the other two, probably because it was confused by the additional information in the contextual sentences.

Global context

In our EACL paper, we consider two other methods for adding global context to our model. Both methods search through the source text for a handful of sentences — two to five worked best — that are strongly related to both the question and the candidate answer. These are then added as inputs to the model.

The two methods measure relationships between sentences in different ways. One uses n-gram overlap. That is, it breaks each sentence up into one-word, two-word, and three-word sequences and measures the overlaps between those sequences across sentences.
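A minimal sketch of n-gram-based selection follows. The text above does not say how the overlap is turned into a score, so the Jaccard ratio used here, the way the question and candidate scores are summed, and the names `ngrams`, `overlap`, and `select_context` are all assumptions for illustration.

```python
from typing import List, Set, Tuple

def ngrams(sentence: str, max_n: int = 3) -> Set[Tuple[str, ...]]:
    """All one-, two-, and three-word sequences in a sentence."""
    tokens = sentence.lower().split()
    return {
        tuple(tokens[i:i + n])
        for n in range(1, max_n + 1)
        for i in range(len(tokens) - n + 1)
    }

def overlap(a: str, b: str) -> float:
    """Jaccard overlap of the two sentences' n-gram sets (assumed metric)."""
    grams_a, grams_b = ngrams(a), ngrams(b)
    if not grams_a or not grams_b:
        return 0.0
    return len(grams_a & grams_b) / len(grams_a | grams_b)

def select_context(question: str, candidate: str,
                   sentences: List[str], k: int = 3) -> List[str]:
    """Pick the k sentences most related to both question and candidate."""
    pool = [s for s in sentences if s != candidate]
    ranked = sorted(
        pool,
        key=lambda s: overlap(question, s) + overlap(candidate, s),
        reverse=True,
    )
    return ranked[:k]
```

With `k` between two and five, `select_context` returns the handful of sentences that would be appended to the model's input as global context.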

The other method uses contextual word embeddings to determine semantic relationships between sentences, based on their proximity in the embedding space. In experiments, this is the approach that worked best.
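Proximity in the embedding space is commonly measured with cosine similarity, so a sketch of the selection step might look like the following. It assumes the sentence vectors have already been produced by some encoder (not shown), that a single query vector represents the question and candidate together, and that cosine similarity is the proximity measure; none of these details is stated above, and the toy two-dimensional vectors are purely illustrative.

```python
import math
from typing import List, Sequence

def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def top_k_by_similarity(query_vec: Sequence[float],
                        sentence_vecs: List[Sequence[float]],
                        k: int = 3) -> List[int]:
    """Indices of the k sentence vectors closest to the query vector."""
    ranked = sorted(
        range(len(sentence_vecs)),
        key=lambda i: cosine(query_vec, sentence_vecs[i]),
        reverse=True,
    )
    return ranked[:k]

# Toy vectors standing in for encoder outputs.
query = [1.0, 0.0]
sentence_vectors = [[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
closest = top_k_by_similarity(query, sentence_vectors, k=2)
```

Unlike n-gram overlap, this ranking can favor a sentence that shares no words with the question, as long as the encoder places the two close together.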

In our experiments, we used three different architectures to explore our approach to context-aware answer selection. In all three, the inputs included both local-context information — as in our ECIR paper — and global-context information.

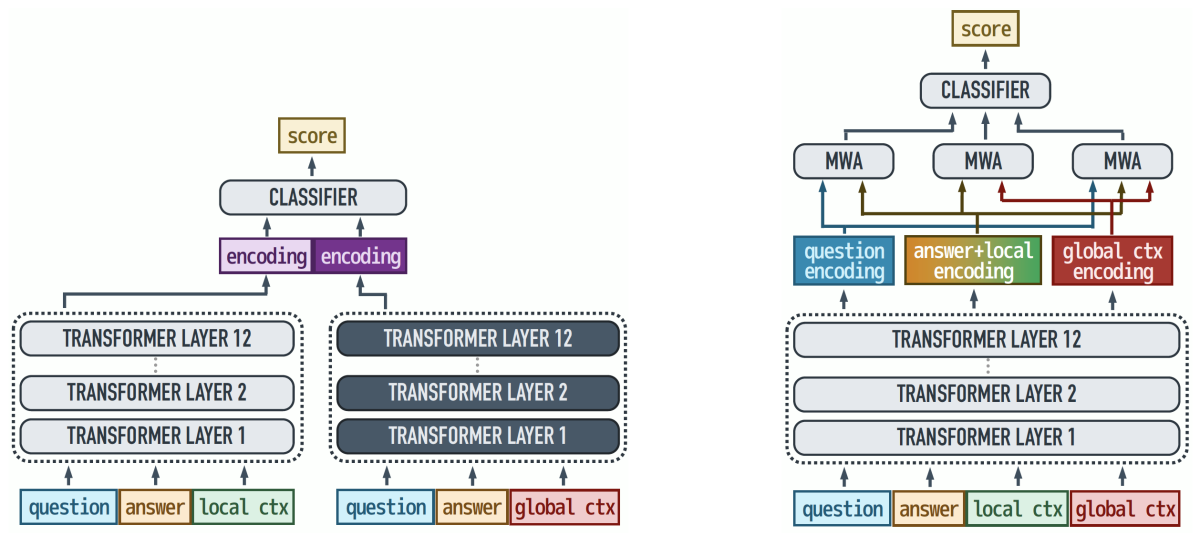

In the first architecture, we just concatenated the global-context sentences with the question, candidate answer, and local-context sentences.

The second architecture uses an ensemble approach. It takes two input vectors: one concatenates the question and candidate answer with the local-context sentences, and the other concatenates them with the global-context sentences. The two input vectors pass to separate encoders, which produce separate vector representations for further processing. We suspected that this would increase precision, but at a higher computational cost.

The third architecture uses multiway attention to try to capture some of the gains of the ensemble architecture, but at lower cost. The multiway-attention model uses a single encoder to produce representations of all the inputs. Those representations are then fed into three separate attention blocks.

The first block forces the model to jointly examine question, answer, and local context; the second focuses on the relationship between local and global context; and the last attention block captures relations in the entire sequence. The architecture thus preserves some of the information segregation of the ensemble method.
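One way to picture the division of labor among the three blocks is as a set of masks over the encoded sequence, one per block. The sketch below is only a schematic reading of the description above: the segment labels, the block names, and the idea of implementing the restriction as boolean masks are assumptions, and the actual model may segregate the inputs differently.

```python
from typing import Dict, List, Set

# Which input segments each attention block is allowed to see (assumed).
BLOCK_SEGMENTS: Dict[str, Set[str]] = {
    "question_answer_local": {"question", "answer", "local"},
    "local_global": {"local", "global"},
    "full_sequence": {"question", "answer", "local", "global"},
}

def block_masks(segments: List[str]) -> Dict[str, List[bool]]:
    """For each block, mark the token positions it attends over.

    `segments` gives the segment label of every token in the single
    encoded sequence, in order.
    """
    return {
        name: [segment in allowed for segment in segments]
        for name, allowed in BLOCK_SEGMENTS.items()
    }

# Six-token toy sequence: two question tokens, one answer token,
# one local-context token, and two global-context tokens.
segments = ["question", "question", "answer", "local", "global", "global"]
masks = block_masks(segments)
```

All three masks are applied to the output of the same encoder, which is where the cost saving over the two-encoder ensemble would come from.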

And indeed, in our tests, the ensemble method fared best, but the multiway-attention model was close behind, suffering drop-offs of between 0.1% and 1% on the three metrics we used for evaluation.

All three of our context-aware models, however, outperformed the state-of-the-art baseline, establishing a new standard in answer selection precision.