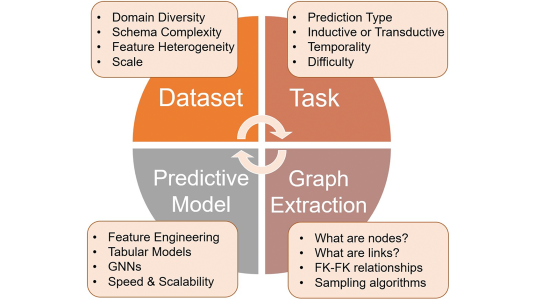

SageMaker is a service from Amazon Web Services that lets customers quickly and easily build machine learning models for deployment in the cloud. It includes a suite of standard machine learning algorithms such as k-means clustering, principal component analysis, neural topic modeling, and time series forecasting.

Last week at SIGMOD/PODS, the Association for Computing Machinery’s major conference on data systems, my colleagues and I described the design of the system that supports these algorithms.

The contexts in which cloud-based machine learning models operate are rarely static. Models often need updating as new training data becomes available or new use cases arise; some models are updated hourly.

Simply retraining a model on new data, however, risks eroding the knowledge the model has previously acquired. Retraining the model on the new and old data together avoids this problem, but it can be prohibitively time-consuming.

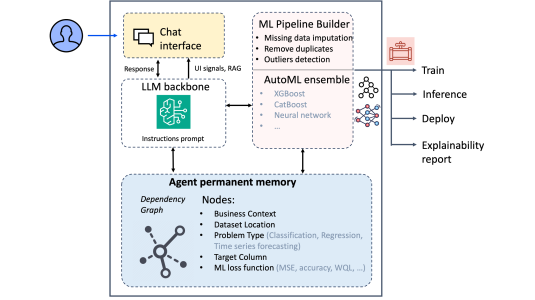

The SageMaker system design helps resolve this impasse. It also makes model training easier to parallelize and makes it more efficient to optimize model “hyperparameters”: settings, such as the model’s structure or the configuration of its training process, whose values can affect performance.

In neural networks, for instance, hyperparameters include features like the number of network layers, the number of nodes per layer, and the network’s learning rate. The optimal settings of a model’s hyperparameters vary from task to task, and tuning hyperparameters to a particular task is typically a tedious, trial-and-error process.

Our system design addresses these problems by distinguishing between a model and the model state. In this context, the state is an executive summary of the data that the model has seen so far.

To take a trivial example, suppose that a model is calculating a running average of an incoming stream of numbers. The state of the model would include both the sum of all the numbers it’s seen and how many of them there were. If the model stores that state, then, when a new stream of numbers comes in the next week, it can simply add the new numbers to the sum and update the count, without needing to re-add the numbers it’s already seen.
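The running-average example is small enough to write out in full. The Python sketch below is purely illustrative (the class `RunningMeanState` and its methods are hypothetical, not SageMaker code): the state is just a sum and a count, and the average is computed from it whenever it is needed.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class RunningMeanState:
    """Fixed-size summary of every number seen so far: their sum and their count."""

    total: float = 0.0
    count: int = 0

    def update(self, batch: Iterable[float]) -> None:
        # Fold the new numbers into the state; old numbers are never revisited.
        for x in batch:
            self.total += x
            self.count += 1

    def mean(self) -> float:
        # The "model" (the average) is derived from the state on demand.
        if self.count == 0:
            raise ValueError("no data seen yet")
        return self.total / self.count


state = RunningMeanState()
state.update([2.0, 4.0, 6.0])  # week 1
print(state.mean())  # 4.0
state.update([8.0, 10.0])  # week 2: only the new numbers are processed
print(state.mean())  # 6.0
```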

Of course, most machine learning models perform tasks that are more complex than simple averaging, and the information that the state must capture will vary from task to task: it could, for instance, include representative samples from the data it’s seen. With SageMaker, we’ve identified separate state variables for each of the machine learning algorithms we support.

One of the advantages of tracking state is model stability. The state is of fixed size: the model may see more and more data, but the state’s summary of the data always takes up the same space in memory.
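One standard way to keep a representative sample in a fixed amount of memory is reservoir sampling, in which every item seen so far has the same chance of being in the sample. The article doesn't say which sampling method, if any, SageMaker uses, so treat this as an illustration of a fixed-size state rather than the system's implementation; `ReservoirState` and its parameters are hypothetical.

```python
import random
from typing import Any, Iterable, List, Optional


class ReservoirState:
    """Uniform random sample of at most `capacity` stream items (reservoir sampling, Algorithm R).

    Memory stays at `capacity` items however many items are seen.
    """

    def __init__(self, capacity: int, seed: Optional[int] = None) -> None:
        self.capacity = capacity
        self.seen = 0  # number of items seen so far
        self.sample: List[Any] = []
        self._rng = random.Random(seed)

    def update(self, batch: Iterable[Any]) -> None:
        for x in batch:
            self.seen += 1
            if len(self.sample) < self.capacity:
                # Fill the reservoir first.
                self.sample.append(x)
            else:
                # Keep the new item with probability capacity / seen,
                # overwriting a uniformly chosen slot.
                j = self._rng.randrange(self.seen)
                if j < self.capacity:
                    self.sample[j] = x


state = ReservoirState(capacity=100, seed=0)
for week in range(10):
    state.update(range(week * 10_000, (week + 1) * 10_000))
print(state.seen, len(state.sample))  # 100000 100
```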

This means that the cost of training the model, in both time and system resources, scales linearly with the amount of new training data, rather than with all the data the model has ever seen. That matters: if training time scaled superlinearly, a large enough volume of data could cause training to time out and fail.
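A back-of-the-envelope comparison shows why a fixed-size state keeps costs linear. Assume, for illustration, that each update of a fixed-size state costs at most some constant $c$, and compare a hypothetical design whose state grows by one entry per example, with every update touching the whole state. Over $n$ examples:

```latex
\begin{aligned}
T_{\text{fixed}}(n)   &= \sum_{i=1}^{n} c = c\,n \\
T_{\text{growing}}(n) &= \sum_{i=1}^{n} c\,i = \frac{c\,n(n+1)}{2}
\end{aligned}
```

Doubling the data doubles the first cost but roughly quadruples the second.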

The averaging example illustrates another facet of our system: it needs to operate on streaming data. That is, it may see each training example only once, and the sequence of examples may break off at any point. At any such breakpoint, it should be able to synthesize what it’s learned to produce a working, up-to-date model.
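Principal component analysis, one of the algorithms mentioned above, fits this streaming pattern naturally. The sketch below assumes a textbook choice of state (row count, sum of rows, and sum of row outer products) that need not match SageMaker's; `StreamingPCAState` is a hypothetical name. Each row is used once, and a working model can be produced after any batch.

```python
import numpy as np


class StreamingPCAState:
    """Fixed-size PCA state: row count, sum of rows, and sum of row outer products.

    For d features the state holds O(d^2) numbers, however many rows are seen.
    """

    def __init__(self, d: int) -> None:
        self.n = 0
        self.sum_x = np.zeros(d)
        self.sum_xxT = np.zeros((d, d))

    def update(self, batch: np.ndarray) -> None:
        # batch has shape (rows, d); each row is used once and then discarded.
        self.n += batch.shape[0]
        self.sum_x += batch.sum(axis=0)
        self.sum_xxT += batch.T @ batch

    def model(self, k: int) -> np.ndarray:
        # Callable after any batch: top-k principal directions of the data so far.
        if self.n == 0:
            raise ValueError("no data seen yet")
        mean = self.sum_x / self.n
        cov = self.sum_xxT / self.n - np.outer(mean, mean)
        _, eigvecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
        return eigvecs[:, ::-1][:, :k]  # columns are the top-k components


rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 3)) * np.array([5.0, 1.0, 0.5])  # most variance along axis 0
state = StreamingPCAState(d=3)
for chunk in np.array_split(X, 7):  # the stream arrives in 7 pieces
    state.update(chunk)
top = state.model(k=1)
```

The sum-of-squares formula for the covariance can lose precision when the mean is large relative to the spread; centered or pairwise updates are more robust in practice.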

Our system supports this learning paradigm. But it also works perfectly well in the standard machine learning setting, where training examples are broken into fixed-size batches, and the model runs through the same training set multiple times until its performance stops improving.

Distributed state

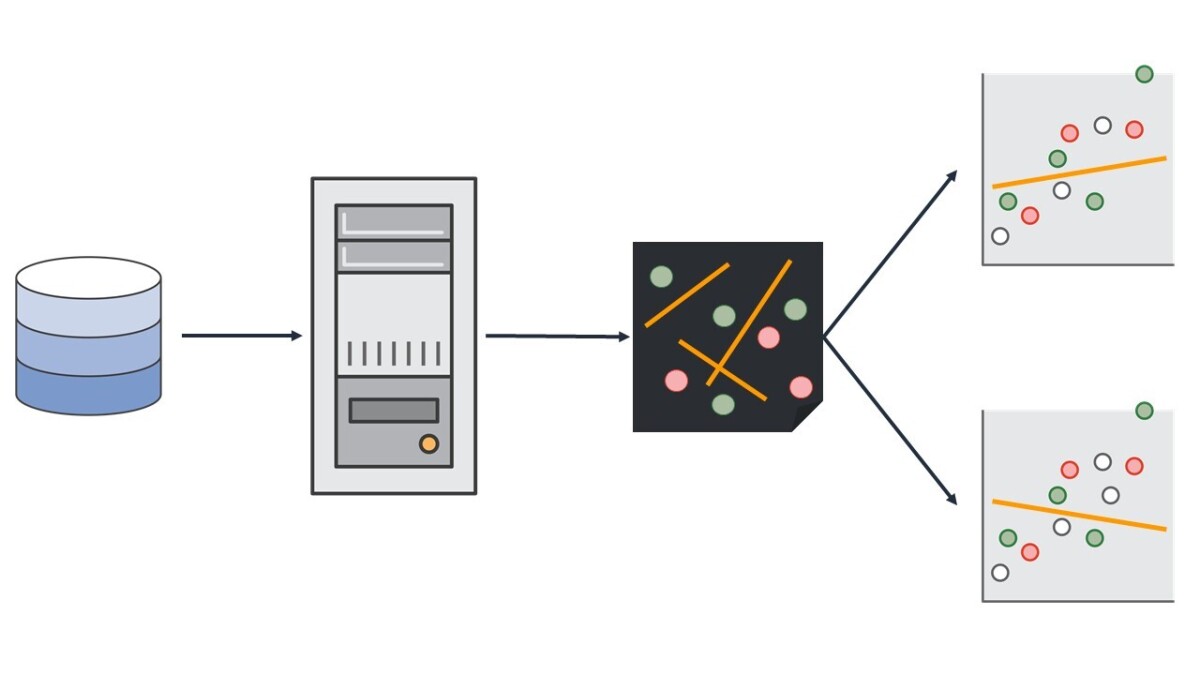

When the system trains a model in parallel, each parallel processor receives its own copy of the state, which it updates locally. To synchronize these local state updates, we use a parameter server, an open-source framework in which processors push their updates to a shared store and pull back the combined state.
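For states like the running average, merging local updates is just addition, which is what makes this kind of synchronization cheap. The sketch below simulates four workers in a single process; `MeanState` and `ToyParameterServer` are hypothetical stand-ins (a real parameter server runs as separate networked processes), not the API of any actual parameter-server framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MeanState:
    total: float = 0.0
    count: int = 0

    def update(self, batch: List[float]) -> None:
        self.total += sum(batch)
        self.count += len(batch)

    def merge(self, other: "MeanState") -> None:
        # States of this kind combine by addition, so merge order doesn't matter.
        self.total += other.total
        self.count += other.count


class ToyParameterServer:
    """Hypothetical, in-process stand-in for a parameter server holding the shared state."""

    def __init__(self) -> None:
        self.state = MeanState()

    def push(self, local_update: MeanState) -> None:
        self.state.merge(local_update)

    def pull(self) -> MeanState:
        return MeanState(self.state.total, self.state.count)


data = [float(x) for x in range(1, 101)]  # 1.0 .. 100.0
shards = [data[i::4] for i in range(4)]  # 4 workers, one shard each
server = ToyParameterServer()
for shard in shards:
    local = MeanState()  # each worker's own copy of the state
    local.update(shard)
    server.push(local)
merged = server.pull()
print(merged.total / merged.count)  # 50.5
```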

The synchronization schedule is, again, algorithm-specific. With k-means clustering and principal component analysis, for instance, a given processor doesn’t need to report its state update to the parameter server until it has completed all its computations. A neural network, by contrast, is trained through many small, successive updates to a shared set of weights, so synchronization needs to occur much more frequently.
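To make the "report once at the end" pattern concrete, here is one Lloyd-style k-means iteration split across workers: each worker keeps its own per-cluster sums and counts and hands them over only after processing its whole shard. This is a textbook batch k-means step chosen for illustration, not SageMaker's k-means algorithm, and the function names are hypothetical. A neural network doesn't decompose this way, because each gradient step depends on weights that the other workers are changing too.

```python
from typing import List, Tuple

import numpy as np

Stats = Tuple[np.ndarray, np.ndarray]  # (per-cluster coordinate sums, per-cluster counts)


def local_kmeans_stats(points: np.ndarray, centers: np.ndarray) -> Stats:
    """One worker's full pass: assign each point to its nearest center and
    accumulate per-cluster coordinate sums and point counts."""
    d2 = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)  # (n, k)
    labels = d2.argmin(axis=1)
    sums = np.zeros_like(centers, dtype=float)
    np.add.at(sums, labels, points)
    counts = np.bincount(labels, minlength=centers.shape[0])
    return sums, counts


def merge_and_update(stats: List[Stats], centers: np.ndarray) -> np.ndarray:
    """The single synchronization: add up every worker's stats, then recompute centers."""
    total_sums = sum(s for s, _ in stats)
    total_counts = sum(c for _, c in stats)
    new_centers = centers.astype(float).copy()
    nonempty = total_counts > 0  # empty clusters keep their old center
    new_centers[nonempty] = total_sums[nonempty] / total_counts[nonempty][:, None]
    return new_centers


rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.5, size=(200, 2)), rng.normal(5.0, 0.5, size=(200, 2))])
centers0 = X[[0, 399]]  # one starting center in each blob
shards = np.array_split(X, 3)  # three workers
parallel = merge_and_update([local_kmeans_stats(s, centers0) for s in shards], centers0)
serial = merge_and_update([local_kmeans_stats(X, centers0)], centers0)
```

Because the per-cluster sums and counts simply add, the three-worker result matches a single pass over all the data.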

Just as the state’s data summaries enable efficient retraining of models, they also enable efficient estimates of how different hyperparameter settings affect the model’s performance. That is what allows SageMaker to automate hyperparameter tuning.
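As one illustration of the principle (not a description of SageMaker's tuner, which the article doesn't detail), consider ridge regression, a regularized linear model. If the state is the sufficient statistics $X^\top X$, $X^\top y$, $y^\top y$, and the row count, then the regularization strength can be swept, and each setting scored on held-out data, without re-reading a single training row. All names below are hypothetical.

```python
from typing import Tuple

import numpy as np

# Fixed-size state for a linear model: X^T X (d x d), X^T y (d), y^T y, and row count.
LinState = Tuple[np.ndarray, np.ndarray, float, int]


def suff_stats(X: np.ndarray, y: np.ndarray) -> LinState:
    return X.T @ X, X.T @ y, float(y @ y), X.shape[0]


def ridge_fit(train: LinState, lam: float) -> np.ndarray:
    # Ridge solution w = (X^T X + lam * I)^-1 X^T y, using the state only.
    xtx, xty, _, _ = train
    return np.linalg.solve(xtx + lam * np.eye(xtx.shape[0]), xty)


def mse_from_state(val: LinState, w: np.ndarray) -> float:
    # ||y - Xw||^2 = y^T y - 2 w^T X^T y + w^T X^T X w, so no raw rows are needed.
    xtx, xty, yty, n = val
    return float(yty - 2.0 * w @ xty + w @ xtx @ w) / n


rng = np.random.default_rng(0)
X = rng.normal(size=(500, 5))
y = X @ np.array([1.0, -2.0, 0.0, 0.5, 3.0]) + rng.normal(scale=0.1, size=500)
train, val = suff_stats(X[:400], y[:400]), suff_stats(X[400:], y[400:])

# Sweep the regularization hyperparameter without touching X or y again.
scores = {lam: mse_from_state(val, ridge_fit(train, lam)) for lam in [0.01, 0.1, 1.0, 10.0, 100.0]}
best_lam = min(scores, key=scores.get)
```

Each candidate costs one small $d \times d$ solve, independent of how many rows produced the state.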

In the paper, we report the results of experiments in which we compared our system to some standard implementations of the same machine learning techniques.

We found that, on average, our approach was much more resource-efficient. With the linear learner, for instance (an algorithm that learns linear models for tasks such as regression and multiclass classification), our approach enabled an eight-fold increase in parallelization efficiency.

And with k-means clustering, a technique for grouping data points into clusters, our approach enabled a nearly tenfold increase in training efficiency. Indeed, in our experiments, data sets larger than 100 gigabytes caused existing implementations to crash.